A single engine has achieved up to 2.81x speedup for DELTA_LENGTH_BYTE_ARRAY encoded strings and 2.79x speedup for DELTA_BINARY_PACKED integers when compared to CPU-only Parquet reading implementations, attaining a throughput between 2.3 GB/s and 7.2 GB/s (limited by the interface bandwidth of the testing system) depending on the input data. Multiple Parquet files of varying types and encodings were generated in order to measure the performance of the ParquetReaders. This area efficiency allows for instantiating a large number of (possibly different) ParquetReaders for parallel workloads. The ParquetReader has great area efficiency, with a single ParquetReader only requiring between 1.18% and 2.79% of LUTs, between 1.27% and 2.92% of registers and between 2.13% and 4.47% of BRAM depending on the targeted input data type and encoding. A modular and expandable hardware architecture called the ParquetReader with out-of-the-box support for DELTA_BINARY_PACKED and DELTA_LENGTH_BYTE_ARRAY encodings is proposed and implemented on an Amazon EC2 F1 instance with an XCVU9P FPGA. To that end, an FPGA-based Apache Parquet reading engine is developed that utilizes existing FPGA and memory interfacing hardware to write data to memory in Apache Arrow's in-memory format. In order to better utilize the increasing I/O bandwidth, this work proposes a hardware accelerated approach to converting storage-focused file formats to in-memory data structures. Instead, CPUs are no longer able to decompress and deserialize the data stored in storage-focused file formats fast enough to keep up with the speed at which compressed data is read from storage. With the advent of high-bandwidth non-volatile storage devices, the classical assumption that database analytics applications are bottlenecked by CPUs having to wait for slow I/O devices is being flipped around. 
Van Leeuwen, Lars (TU Delft Electrical Engineering, Mathematics and Computer Science TU Delft Quantum & Computer Engineering) You need to update all the hadoop JARs and their transitive dependencies.High-Throughput Big Data Analytics Through Accelerated Parquet to Arrow Conversion Helps you work out what's going on.ĭo not try to drop the Hadoop 2.8+ hadoop-aws JAR on the classpath along with the rest of the hadoop-2.7 JAR set and expect to see any speedup. Note: Hadoop 2.8+ also has a nice little feature: if you call toString() on an S3A filesystem client in a log statement, it prints out all the filesystem IO stats, including how much data was discarded in seeks, aborted TCP connections &c. If you need it you need to update hadoop*.jar and dependencies, or build Spark up from scratch against Hadoop 2.8 csv.gz.Īlso, you don't get the speedup on Hadoop 2.7's hadoop-aws JAR. The random IO operation affects on bulk loading of things like.

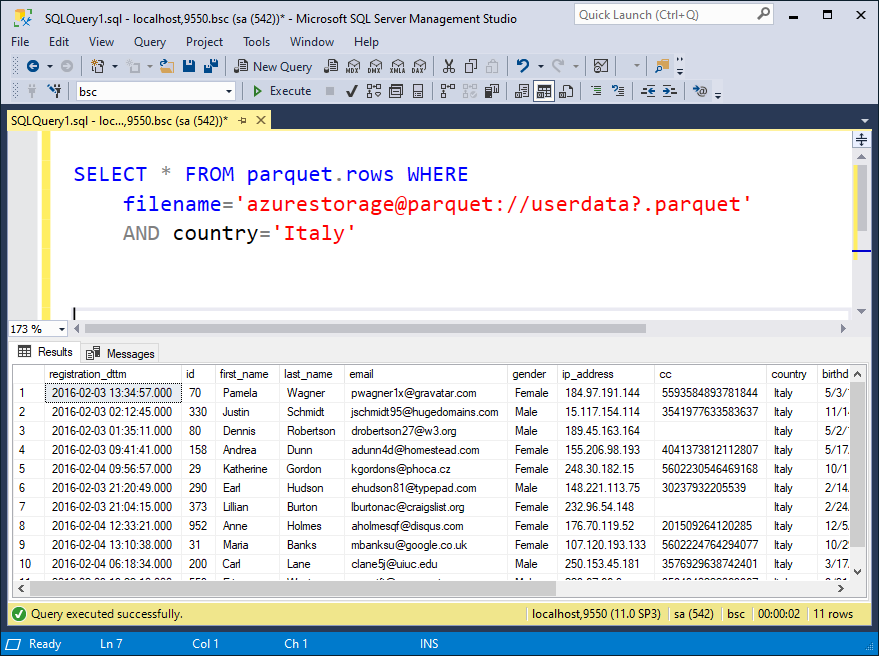

Hadoop 2.7 and earlier handle the aggressive seek() round the file badly, because they always initiate a GET offset-end-of-file, get surprised by the next seek, have to abort that connection, reopen a new TCP/HTTPS 1.1 connection (slow, CPU heavy), do it again, repeatedly. In Apache Hadoop, you need hadoop 2.8 on the classpath and set the properly .=random to trigger random access. Let me break down some facts that will clear your confusion:ĭoes the Parquet code get the predicates from spark? Yesĭoes parquet then attempt to selectively read only those columns, using the Hadoop FileSystem seek() + read() or readFully(position, buffer, length) calls? Yesĭoes the S3 connector translate these File Operations into efficient HTTP GET requests? In Amazon EMR: Yes. Spark 2.x comes with a vectorized Parquet reader that does decompression and decoding in column batches, providing ~ 10x faster read performance. It also stores column metadata and statistics, which can be pushed down to filter columns. Parquet detects and encodes the similar or same data, using a technique that conserves resources. Using Parquet, data is arranged in a columnar fashion, where it puts related values in close proximity to each other to optimize query performance, minimize I/O, and facilitate compression. While working with Spark Apache Parquet gives the fastest read performance.
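The abstract above names the DELTA_BINARY_PACKED encoding without describing it. As a rough illustration only, here is a minimal Python sketch of the idea behind it: store the first value, then store each later value as a small non-negative offset from its block's minimum delta. This is a simplification and not the actual Parquet wire format, which also uses miniblocks, variable-length integers, and real bit-packing. The names `delta_encode`, `delta_decode`, and `block_size` are hypothetical and appear neither in Parquet nor in the thesis.

```python
from typing import List, Tuple

# One encoded block: (min_delta, bit_width, offsets). Hypothetical layout for illustration.
Block = Tuple[int, int, List[int]]


def delta_encode(values: List[int], block_size: int = 4) -> Tuple[int, List[Block]]:
    """Simplified delta encoding: keep the first value, then per-block offsets."""
    if not values:
        raise ValueError("need at least one value")
    # Differences between consecutive values.
    deltas = [b - a for a, b in zip(values, values[1:])]
    blocks: List[Block] = []
    for start in range(0, len(deltas), block_size):
        chunk = deltas[start:start + block_size]
        min_delta = min(chunk)
        # Subtracting the block minimum makes every offset non-negative.
        offsets = [d - min_delta for d in chunk]
        # Bits a bit-packer would need per offset in this block.
        bit_width = max(offsets).bit_length()
        blocks.append((min_delta, bit_width, offsets))
    return values[0], blocks


def delta_decode(first: int, blocks: List[Block]) -> List[int]:
    """Inverse of delta_encode: rebuild values by accumulating the deltas."""
    out = [first]
    for min_delta, _bit_width, offsets in blocks:
        for off in offsets:
            out.append(out[-1] + min_delta + off)
    return out


values = [100, 101, 102, 104, 107, 107, 108]
first, blocks = delta_encode(values)
assert delta_decode(first, blocks) == values
```

For slowly changing integers the per-block bit width stays small (here 2 bits and 1 bit per offset), which is where the space saving comes from. Note that decoding is inherently sequential, since each value depends on the one before it.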
